**[Prometheus](https://prometheus.io/):** Scrapes metrics from the Metrics Server API and from applications, and stores the data in a time-series database that provides insight into application health and performance.

**[Grafana](https://grafana.com/grafana/):** Provides visualization and alerting capabilities based on the metrics stored in Prometheus's time-series database.

**Logging**

**[Vector](https://vector.dev/):** A companion agent that efficiently gathers container logs and sends them to Loki and other storage locations (S3, SIEM tools, etc.), simplifying log monitoring, troubleshooting, and compliance auditing and enhancing the overall observability of the mission environment.

**[Loki](https://grafana.com/docs/loki/latest/):** A log aggregation system that allows users to store, search, and analyze logs across their applications.

**Security and Compliance**

**[Falco](https://falco.org/):** Provides real-time threat detection and security monitoring for cloud-native environments.

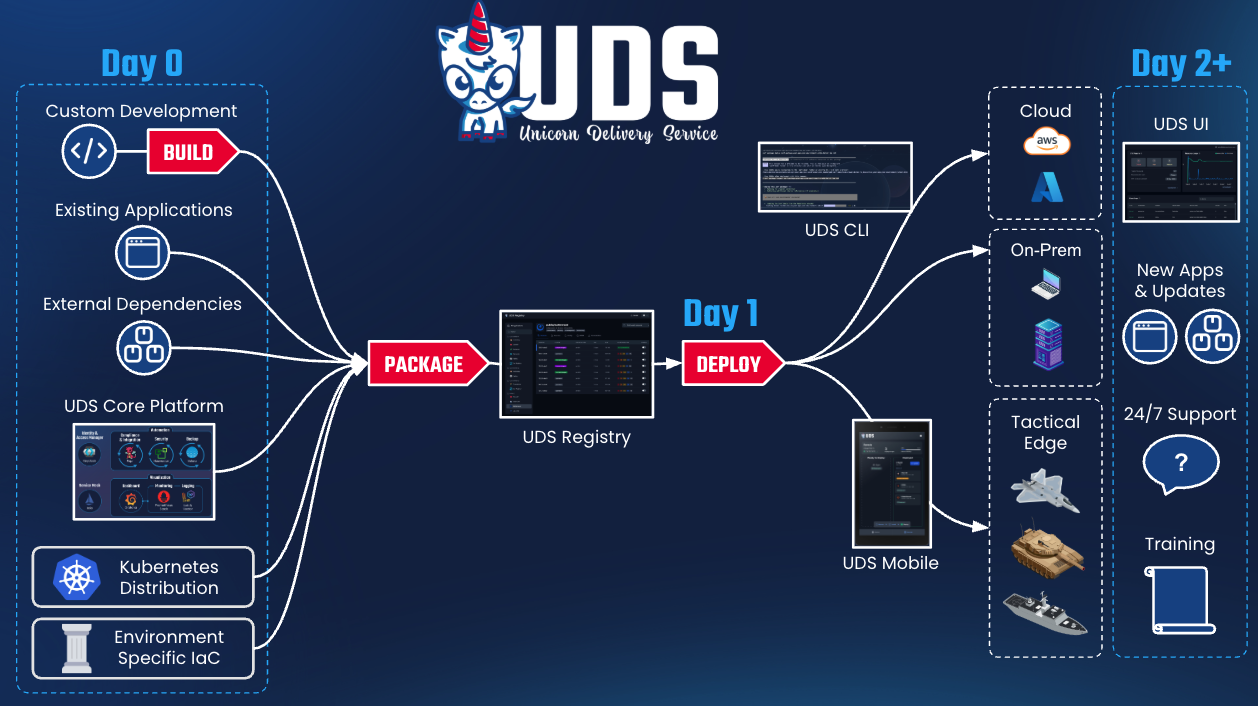

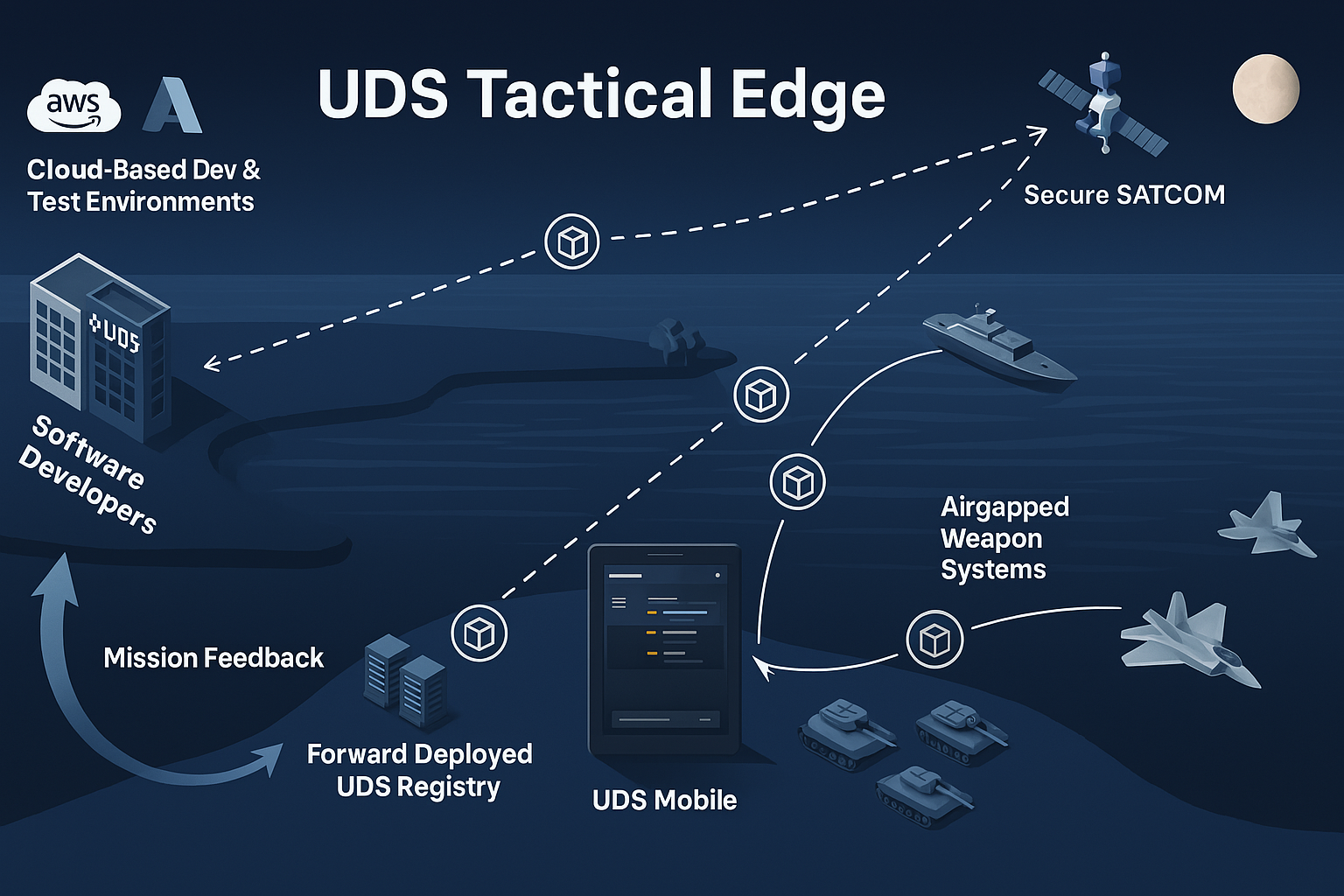

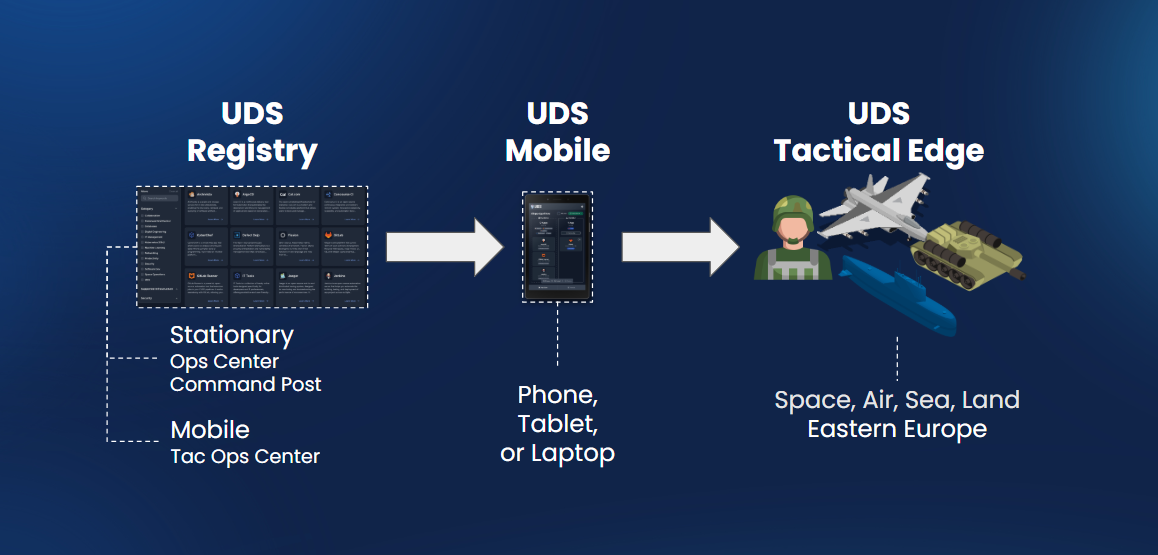

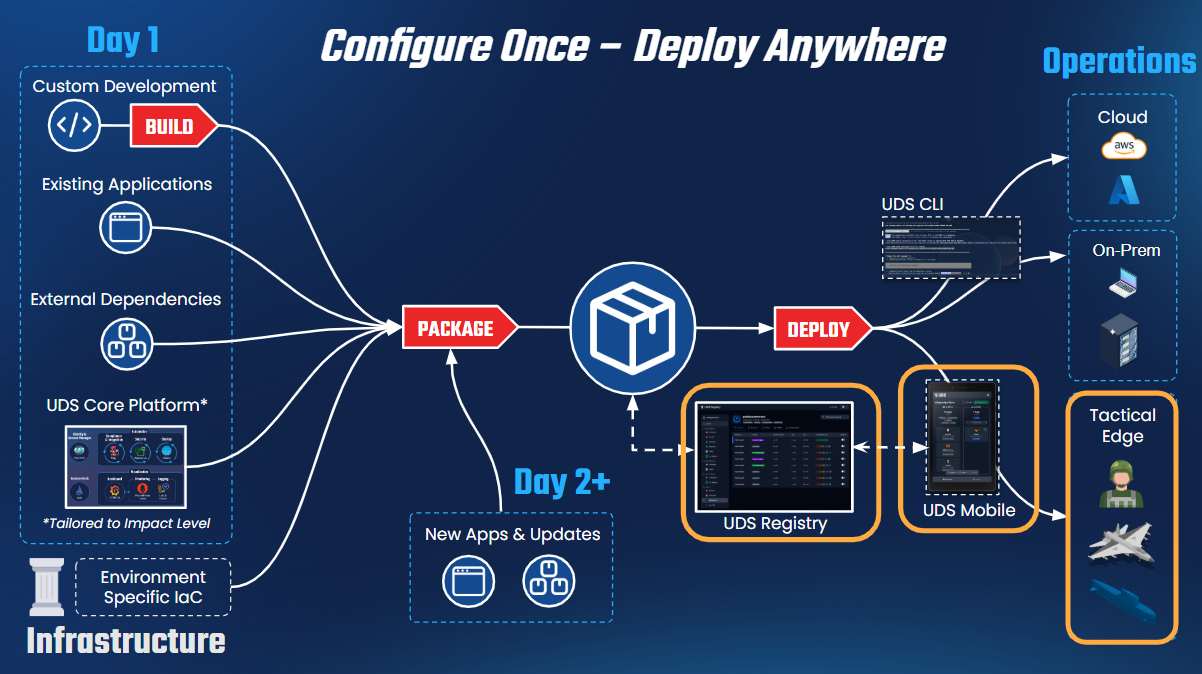

**[Pepr](https://pepr.dev/):** UDS policy engine and operator for enhanced security and compliance.

**Identity and Access Management**

**[Keycloak](https://www.keycloak.org/):** A robust open-source Identity and Access Management solution that provides centralized authentication, authorization, and user management for enhanced security and control over access to mission-critical resources.

**Backup and Restore**

**[Velero](https://velero.io/):** Provides backup and restore capabilities for Kubernetes clusters, ensuring data protection and disaster recovery.

**Authorization**

**[AuthService](https://github.com/istio-ecosystem/authservice):** Offers centralized authorization services, managing access control and permissions within the Istio mesh. AuthService plays a supporting role to Keycloak by handling part of the OIDC redirect flow.

-----

# Why UDS?

UDS creates, supports, and maintains a secure runtime platform that simplifies software delivery and deployment for both application development teams and platform teams. UDS deploys mission applications into any environment while providing documentation and evidence to facilitate obtaining an Authority to Operate (ATO). By building on open-source projects like Zarf, Pepr, Lula, and more, UDS enables the creation of portable and compliant software artifacts.

With UDS, mission teams can:

- Deploy a new authorizable software environment swiftly and seamlessly.
- Update mission application bundles and packages on demand in minutes.
- Deploy mission applications to classified and unclassified cloud, on-premises, and edge environments.
- Use open and extensible architectures.
- Avoid data and vendor lock-in.

## Security and Compliance

UDS places a strong emphasis on security and compliance, enabling Mission Heroes to meet stringent requirements for obtaining an Authority to Operate (ATO).
It binds compliance to specific software features, ensuring that the controls required for authorization are met efficiently. By integrating security and compliance into the software development and deployment lifecycle, UDS helps your team proactively address potential vulnerabilities, reduce risk, and maintain a secure software ecosystem.

## Enhanced Portability and Cross-Platform Support

UDS offers enhanced portability, allowing teams to deploy their software artifacts across diverse environments regardless of the underlying infrastructure. Whether the target is a cloud, on-premises, or edge environment, UDS ensures that mission applications can be easily migrated to and run on different platforms. Deploying the same artifacts across domains reduces the need for platform-specific modifications, and incorporating compliance documentation directly into the software delivery pipeline accelerates the ATO process. UDS's portability enables Mission Heroes to adapt quickly to changing infrastructure needs and expand their reach to new environments.

## Open Source and Avoiding Vendor Lock

UDS is built on a foundation of open-source technologies, giving Mission Heroes the freedom to access and modify the underlying code. By building on open-source projects like Zarf, Pepr, Keycloak, Istio, and more, UDS ensures that users can avoid vendor lock-in and maintain control over their data. This open approach allows Mission Heroes to customize and optimize their software delivery processes.

## Leverage UDS Core

UDS Core offers a foundational suite of applications designed to establish a secure and efficient mission environment. It encompasses critical functionality such as collaboration, monitoring, logging, security, compliance, and data protection. By using these integrated applications, Mission Heroes can confidently deploy and operate source packages that adhere to rigorous security and performance standards.

-----

# UDS Bundles

A UDS Bundle is a collection of [UDS Packages](/structure/packages) designed to facilitate the delivery of software solutions for specific missions or software delivery processes. With UDS Bundles, teams can efficiently adapt to the unique requirements of each mission without sacrificing the reliability and security of the software delivery process.

UDS Bundles enable:

- A structured and repeatable approach for delivering software solutions tailored to diverse mission needs. Each bundle serves as a collection of capabilities, facilitating the delivery of software solutions for specific mission objectives.
- Efficient adaptability to the unique requirements of each mission without compromising the reliability and security of the software delivery process.
- Secure and consistent software delivery by bundling tools and configurations required for specific mission capabilities, ensuring a standardized and reusable approach.

## Benefits of UDS Bundles

**Consistency:** UDS Bundles provide a standardized approach to software delivery, ensuring consistency across different missions and environments.

**Reusability:** The modular nature of UDS Bundles allows for the reuse of capabilities, saving time and effort in software delivery.

**Security and Compliance:** By incorporating controls and documented configurations, UDS Bundles promote secure and compliant software deployments.

**Scalability:** UDS Bundles can be adapted and extended to accommodate different mission requirements and environments.

## Key Features

### Modularity and Reusability

UDS Bundles are designed to be modular and reusable, allowing teams to combine different bundles as needed to meet the specific requirements of their missions or projects. By leveraging pre-defined capabilities and tools, UDS Bundles provide a standardized and consistent approach to software delivery.
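To make the modular, reusable structure described above concrete, the script below writes a minimal bundle definition that composes three packages. It assumes the `uds-bundle.yaml` schema uses `kind: UDSBundle`, a `metadata` block, and a `packages` list whose entries name a remote `repository` and a `ref`. Every repository, name, and ref shown is a hypothetical placeholder, so check the bundle schema for your UDS CLI version before adapting it.

```shell
#!/usr/bin/env bash
# Sketch: write a UDS bundle definition composed of three packages.
# All names, repositories, and refs are hypothetical placeholders.
set -euo pipefail

BUNDLE_DIR="${BUNDLE_DIR:-./my-bundle}"
mkdir -p "$BUNDLE_DIR"

cat > "$BUNDLE_DIR/uds-bundle.yaml" <<'EOF'
kind: UDSBundle
metadata:
  name: my-mission-bundle
  description: Example bundle composing a Zarf init package, UDS Core, and one app
  version: 0.1.0

# Listed in the intended deployment order: UDS Core before mission apps.
packages:
  - name: init
    repository: ghcr.io/example/packages/init      # placeholder
    ref: 1.0.0                                      # placeholder
  - name: core
    repository: ghcr.io/example/packages/uds/core  # placeholder
    ref: 1.0.0
  - name: my-app
    repository: ghcr.io/example/packages/my-app    # placeholder
    ref: 1.0.0
EOF

echo "wrote $BUNDLE_DIR/uds-bundle.yaml"
```

With the file in place, running `uds create ./my-bundle --confirm` should package it into a bundle tarball, as described in the `uds create` reference.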

### Composition of UDS Bundles

Each UDS Bundle is composed of a set of capabilities, where each capability is achieved by selecting specific tools or functional components to perform the required functions. This composition ensures that essential functionality and configuration are encapsulated within the bundle, making the software solutions easier to deploy and operate.

### UDS Core Bundle

The UDS Core Bundle plays a central role within the UDS architecture. It is the foundational bundle that must be delivered before any other optional bundles or mission capabilities are deployed. The UDS Core Bundle establishes the basic architecture and secure runtime environment needed for successful software delivery using UDS.

### Versatility of UDS Bundles

UDS Bundles are versatile: they can be shared and deployed across different environments, enabling consistent and reliable results in various scenarios. This adaptability makes UDS Bundles suitable for diverse mission needs and environments.

### Security and Compliance

UDS Bundles contain SBOMs for every package they bundle, including anything that [Zarf](https://docs.zarf.dev/ref/sboms/) has pulled and packaged. When you integrate your bundle with UDS Core, you also benefit from compliance and security standards that are handled automatically during deployment, such as network policies and pod security policies. For additional details on the security protections UDS provides, see the [Security Overview section](/security/overview/).

-----

# UDS Packages

> UDS Package Reference

A UDS Package is a [Zarf Package](https://docs.zarf.dev/ref/packages/) with two additions:

1. It is meant to be deployed on top of [UDS Core](/reference/uds-core/overview/).
2. It contains the [UDS Package Kubernetes custom resource](/reference/configuration/custom-resources/packages-v1alpha1-cr/).
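To illustrate the second point, the script below writes a minimal `Package` custom resource for a hypothetical app named `my-app`. It assumes the `uds.dev/v1alpha1` API and the `spec.network.expose`, `spec.network.allow`, and `spec.sso` fields described in the custom resource reference linked above. The names, labels, port, gateway, and redirect URI are placeholders; verify each field against that reference for your UDS Core version.

```shell
#!/usr/bin/env bash
# Sketch: a UDS Package custom resource for a hypothetical app "my-app".
set -euo pipefail

cat > uds-package.yaml <<'EOF'
apiVersion: uds.dev/v1alpha1
kind: Package
metadata:
  name: my-app
  namespace: my-app
spec:
  network:
    # Expose the app's Service through the tenant gateway
    expose:
      - service: my-app
        selector:
          app.kubernetes.io/name: my-app
        gateway: tenant
        host: my-app
        port: 8080
    # Allow the app's pods to reach the Kubernetes API server
    allow:
      - direction: Egress
        selector:
          app.kubernetes.io/name: my-app
        remoteGenerated: KubeAPI
  # Request an OIDC client in Keycloak for this app
  sso:
    - name: My App
      clientId: uds-my-app
      redirectUris:
        - "https://my-app.uds.dev/login"
EOF
```

The UDS Operator reads a resource like this and generates the corresponding policies and SSO client. The app's Helm chart itself needs no UDS-specific changes for that.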

These packages include all the [OCI images](https://opencontainers.org/) (container images), [Helm charts](https://circleci.com/blog/what-is-helm/#:~:text=A%20Helm%20chart%20is%20a,up%20your%20application%20as%20needed.), and supplemental Kubernetes manifests required for the app to communicate with UDS Core. The UDS Operator in turn automatically applies the appropriate security and network policies to ensure a secure and compliant runtime environment.

A UDS Package _does not_ include dependencies like databases or object storage*. These external dependencies are deployed alongside a UDS Package inside a [UDS Bundle](/structure/bundles/).

To move from the theoretical to the concrete, see the next section on the anatomy of a UDS Package repo.

## Anatomy of a UDS Package Repo

_Disclaimer: The exact file structure of UDS Packages is subject to change. This document will fall out of date but should retain conceptual accuracy. Once you understand this point-in-time snapshot of how a UDS Package is built, it should be fairly easy to extend that knowledge to later improvements. To aid its utility as a teaching tool, links to source code are pinned to a specific GitLab Package release._

For an in-depth, developer-focused treatment of UDS Packages, see [the documentation in GitHub](https://github.com/defenseunicorns/uds-common/blob/main/docs/uds-packages/guide.md). You can also view the UDS Package template [in GitHub here](https://github.com/uds-packages/template/tree/main).

This document covers the main components of a UDS Package and their functions at an overview level. It then shows specifically how these components are tied together in the case of GitLab.

### Anatomy Overview

| Directory / Top-level file | Role | Function |
| :--- | :--- | :--- |
| `.github/` | CI/CD | Directives to GitHub, primarily the build, test, and release pipeline(s). |
| `adr/` | Docs | "ADR" stands for Architectural Decision Records. These documents record key architectural decisions and their reasoning. |
| `bundle/` | Testing & Development | When you test a UDS Package, you need to deploy it with other applications, such as databases, to test your configuration. The `bundle/` directory in a UDS Package repo serves that purpose: it deploys lightweight databases, key-value stores, and object stores as needed alongside the application to permit testing. It also serves as an example of how to use the UDS Package in a bundle. Nothing in the `bundle/` directory ever becomes part of the UDS Application Package. |
| `charts/` | UDS Package Component | Helm charts created to supplement the application's own Helm chart. At minimum, this includes the UDS Package manifest and the SAML/OIDC configuration for automatic integration with our Keycloak SSO application (which is part of UDS Core). It often also includes another resource or two as needed to fully integrate the app into the UDS ecosystem. |
| `common/` | UDS Package Component | This directory holds a single `zarf.yaml` file, the base Zarf package definition, which the root-level `zarf.yaml` imports. You can think of it as the parent class in an object-oriented programming model. It generally pulls the charts from the `charts/` directory and the main application's Helm chart into the Zarf package but leaves [flavor](/overview/acronyms-and-terms/#flavor-as-in-uds-package-or-bundle-flavor)-specific details out. |
| `docs/` | Docs | Documentation about the UDS Package. |
| `src/` | Testing & Development | This contains additional Zarf package source code in each leaf directory. These packages never become part of the UDS Package; rather, they are included in the test bundle to help glue the application to the larger ecosystem. This often includes a Zarf package that contains only the application's namespace resource. By putting this in a separate Zarf package and deploying it ahead of time, secrets deployed to the application's namespace by other packages (such as authentication secrets) do not get deleted when you run `zarf package remove

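As a rough companion to the table above, the script below scaffolds the listed top-level directories for a hypothetical package named `my-app`. It also writes a `common/zarf.yaml` base definition that the root `zarf.yaml` imports. This is not the official UDS package template linked above. It assumes Zarf's `ZarfPackageConfig` schema with component `import.path` and `only.flavor` keys, and the `upstream` flavor name and image reference are placeholders.

```shell
#!/usr/bin/env bash
# Sketch: scaffold the top-level layout from the table above for a
# hypothetical package "my-app". Not the official UDS package template.
set -euo pipefail

PKG_DIR="${PKG_DIR:-./uds-package-my-app}"
mkdir -p "$PKG_DIR"/{.github/workflows,adr,bundle,charts,common,docs,src}

# Base ("parent class") definition: flavor-agnostic content only.
cat > "$PKG_DIR/common/zarf.yaml" <<'EOF'
kind: ZarfPackageConfig
metadata:
  name: my-app-common
components:
  - name: my-app
    required: true
    # Charts from charts/ and the upstream app chart would be listed here.
EOF

# Root definition: imports common/ and adds flavor-specific details.
cat > "$PKG_DIR/zarf.yaml" <<'EOF'
kind: ZarfPackageConfig
metadata:
  name: my-app
components:
  - name: my-app
    required: true
    only:
      flavor: upstream
    import:
      path: common
    images:
      - docker.io/example/my-app:1.0.0   # placeholder image
EOF
```

Keeping images in the root definition and everything else in `common/` lets each flavor swap image sources without duplicating chart configuration.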
-----

# uds

> UDS CLI command reference for uds.

## uds

CLI for UDS Bundles

```
uds COMMAND [flags]
```

### Options

```
  -a, --architecture string         Architecture for UDS bundles and Zarf packages
  -h, --help                        help for uds
      --insecure                    Allow access to insecure registries and disable other recommended security enforcements such as package checksum and signature validation. This flag should only be used if you have a specific reason and accept the reduced security posture.
  -l, --log-level string            Log level when running UDS-CLI. Valid options are: warn, info, debug, trace (default "info")
      --no-color                    Disable color output
      --no-log-file                 Disable log file creation
      --no-progress                 Disable fancy UI progress bars, spinners, logos, etc
      --oci-concurrency int         Number of concurrent layer operations to perform when interacting with a remote bundle. (default 3)
      --skip-signature-validation   Skip signature validation for packages
      --tmpdir string               Specify the temporary directory to use for intermediate files
      --uds-cache string            Specify the location of the UDS cache directory (default "~/.uds-cache")
```
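In CI pipelines, several of the global flags above are often combined to keep logs plain and verbose. The function below is a hypothetical convenience wrapper, not part of UDS CLI. It runs whatever subcommand you pass and appends `--no-progress`, `--no-color`, and `--log-level debug`.

```shell
#!/usr/bin/env bash
# Hypothetical CI helper (not part of UDS CLI): run any uds subcommand with
# plain, non-interactive, debug-level output. The global flags are appended
# after the caller's arguments, so do not pass a literal "--" terminator.
uds_ci() {
  uds "$@" --no-progress --no-color --log-level debug
}
```

For example, `uds_ci deploy uds-bundle-example-amd64-0.1.0.tar.zst --confirm`, where the tarball name is a placeholder. Appending the flags at the end relies on the CLI accepting flags after positional arguments, which is the default for Cobra-based CLIs.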

### SEE ALSO

* [uds completion](/reference/cli/commands/uds_completion/) - Generate the autocompletion script for the specified shell

* [uds create](/reference/cli/commands/uds_create/) - Create a bundle from a given directory or the current directory

* [uds deploy](/reference/cli/commands/uds_deploy/) - Deploy a bundle from a local tarball or oci:// URL

* [uds dev](/reference/cli/commands/uds_dev/) - [beta] Commands useful for developing bundles

* [uds inspect](/reference/cli/commands/uds_inspect/) - Display the metadata of a bundle

* [uds list](/reference/cli/commands/uds_list/) - [alpha] List deployed bundles in the cluster

* [uds logs](/reference/cli/commands/uds_logs/) - View most recent UDS CLI logs

* [uds monitor](/reference/cli/commands/uds_monitor/) - Monitor a UDS Cluster

* [uds publish](/reference/cli/commands/uds_publish/) - Publish a bundle from the local file system to a remote registry

* [uds pull](/reference/cli/commands/uds_pull/) - Pull a bundle from a remote registry and save to the local file system

* [uds remove](/reference/cli/commands/uds_remove/) - Remove a bundle that has been deployed already

* [uds run](/reference/cli/commands/uds_run/) - Run a task using maru-runner

* [uds version](/reference/cli/commands/uds_version/) - Shows the version of the running UDS-CLI binary

-----

# uds completion

> UDS CLI command reference for uds completion.

## uds completion

Generate the autocompletion script for the specified shell

### Synopsis

Generate the autocompletion script for uds for the specified shell.

See each sub-command's help for details on how to use the generated script.

### Options

```
  -h, --help   help for completion
```

### Options inherited from parent commands

```
  -a, --architecture string         Architecture for UDS bundles and Zarf packages
      --insecure                    Allow access to insecure registries and disable other recommended security enforcements such as package checksum and signature validation. This flag should only be used if you have a specific reason and accept the reduced security posture.
  -l, --log-level string            Log level when running UDS-CLI. Valid options are: warn, info, debug, trace (default "info")
      --no-color                    Disable color output
      --no-log-file                 Disable log file creation
      --no-progress                 Disable fancy UI progress bars, spinners, logos, etc
      --oci-concurrency int         Number of concurrent layer operations to perform when interacting with a remote bundle. (default 3)
      --skip-signature-validation   Skip signature validation for packages
      --tmpdir string               Specify the temporary directory to use for intermediate files
      --uds-cache string            Specify the location of the UDS cache directory (default "~/.uds-cache")
```

### SEE ALSO

* [uds](/reference/cli/commands/uds/) - CLI for UDS Bundles

* [uds completion bash](/reference/cli/commands/uds_completion_bash/) - Generate the autocompletion script for bash

* [uds completion fish](/reference/cli/commands/uds_completion_fish/) - Generate the autocompletion script for fish

* [uds completion zsh](/reference/cli/commands/uds_completion_zsh/) - Generate the autocompletion script for zsh

-----

# uds completion bash

> UDS CLI command reference for uds completion bash.

## uds completion bash

Generate the autocompletion script for bash

### Synopsis

Generate the autocompletion script for the bash shell.

This script depends on the 'bash-completion' package.

If it is not installed already, you can install it via your OS's package manager.

To load completions in your current shell session:

`source <(uds completion bash)`

To load completions for every new session, execute once:

#### Linux:

`uds completion bash > /etc/bash_completion.d/uds`

#### macOS:

`uds completion bash > $(brew --prefix)/etc/bash_completion.d/uds`

You will need to start a new shell for this setup to take effect.
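Writing to `/etc/bash_completion.d` typically requires root. A per-user alternative is to load completions from `~/.bashrc`. The sketch below appends a guarded loader line exactly once, so rerunning it does not duplicate the entry. The `add_line_once` helper and the choice of `~/.bashrc` (overridable through a hypothetical `RC_FILE` variable) are assumptions. The `command -v` guard simply skips loading on machines where `uds` is not on `PATH`. The `bash-completion` requirement above still applies.

```shell
#!/usr/bin/env bash
set -euo pipefail

# Hypothetical helper: append LINE to FILE unless an identical line exists.
add_line_once() {
  local line="$1" file="$2"
  touch "$file"
  grep -qxF -- "$line" "$file" || printf '%s\n' "$line" >> "$file"
}

# Load uds completions in each new interactive bash session,
# but only when the uds binary is available.
add_line_once 'command -v uds >/dev/null 2>&1 && source <(uds completion bash)' \
  "${RC_FILE:-$HOME/.bashrc}"
```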

```
uds completion bash
```

### Options

```
  -h, --help                help for bash
      --no-descriptions   disable completion descriptions
```

### Options inherited from parent commands

```
  -a, --architecture string         Architecture for UDS bundles and Zarf packages
      --insecure                    Allow access to insecure registries and disable other recommended security enforcements such as package checksum and signature validation. This flag should only be used if you have a specific reason and accept the reduced security posture.
  -l, --log-level string            Log level when running UDS-CLI. Valid options are: warn, info, debug, trace (default "info")
      --no-color                    Disable color output
      --no-log-file                 Disable log file creation
      --no-progress                 Disable fancy UI progress bars, spinners, logos, etc
      --oci-concurrency int         Number of concurrent layer operations to perform when interacting with a remote bundle. (default 3)
      --skip-signature-validation   Skip signature validation for packages
      --tmpdir string               Specify the temporary directory to use for intermediate files
      --uds-cache string            Specify the location of the UDS cache directory (default "~/.uds-cache")
```

### SEE ALSO

* [uds completion](/reference/cli/commands/uds_completion/) - Generate the autocompletion script for the specified shell

-----

# uds completion fish

> UDS CLI command reference for uds completion fish.

## uds completion fish

Generate the autocompletion script for fish

### Synopsis

Generate the autocompletion script for the fish shell.

To load completions in your current shell session:

`uds completion fish | source`

To load completions for every new session, execute once:

`uds completion fish > ~/.config/fish/completions/uds.fish`

You will need to start a new shell for this setup to take effect.

```
uds completion fish [flags]
```

### Options

```
  -h, --help                help for fish
      --no-descriptions   disable completion descriptions
```

### Options inherited from parent commands

```
  -a, --architecture string         Architecture for UDS bundles and Zarf packages
      --insecure                    Allow access to insecure registries and disable other recommended security enforcements such as package checksum and signature validation. This flag should only be used if you have a specific reason and accept the reduced security posture.
  -l, --log-level string            Log level when running UDS-CLI. Valid options are: warn, info, debug, trace (default "info")
      --no-color                    Disable color output
      --no-log-file                 Disable log file creation
      --no-progress                 Disable fancy UI progress bars, spinners, logos, etc
      --oci-concurrency int         Number of concurrent layer operations to perform when interacting with a remote bundle. (default 3)
      --skip-signature-validation   Skip signature validation for packages
      --tmpdir string               Specify the temporary directory to use for intermediate files
      --uds-cache string            Specify the location of the UDS cache directory (default "~/.uds-cache")
```

### SEE ALSO

* [uds completion](/reference/cli/commands/uds_completion/) - Generate the autocompletion script for the specified shell

-----

# uds completion zsh

> UDS CLI command reference for uds completion zsh.

## uds completion zsh

Generate the autocompletion script for zsh

### Synopsis

Generate the autocompletion script for the zsh shell.

If shell completion is not already enabled in your environment, you will need to enable it. You can execute the following once:

`echo "autoload -U compinit; compinit" >> ~/.zshrc`

To load completions in your current shell session:

`source <(uds completion zsh)`

To load completions for every new session, execute once:

#### Linux:

`uds completion zsh > "${fpath[1]}/_uds"`

#### macOS:

`uds completion zsh > $(brew --prefix)/share/zsh/site-functions/_uds`

You will need to start a new shell for this setup to take effect.

```
uds completion zsh [flags]
```

### Options

```
  -h, --help                help for zsh
      --no-descriptions   disable completion descriptions
```

### Options inherited from parent commands

```
  -a, --architecture string         Architecture for UDS bundles and Zarf packages
      --insecure                    Allow access to insecure registries and disable other recommended security enforcements such as package checksum and signature validation. This flag should only be used if you have a specific reason and accept the reduced security posture.
  -l, --log-level string            Log level when running UDS-CLI. Valid options are: warn, info, debug, trace (default "info")
      --no-color                    Disable color output
      --no-log-file                 Disable log file creation
      --no-progress                 Disable fancy UI progress bars, spinners, logos, etc
      --oci-concurrency int         Number of concurrent layer operations to perform when interacting with a remote bundle. (default 3)
      --skip-signature-validation   Skip signature validation for packages
      --tmpdir string               Specify the temporary directory to use for intermediate files
      --uds-cache string            Specify the location of the UDS cache directory (default "~/.uds-cache")
```

### SEE ALSO

* [uds completion](/reference/cli/commands/uds_completion/) - Generate the autocompletion script for the specified shell

-----

# uds create

> UDS CLI command reference for uds create.

## uds create

Create a bundle from a given directory or the current directory

```
uds create [DIRECTORY] [flags]
```
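A release script might build a versioned bundle without prompting and optionally sign it. The function below is a hypothetical wrapper around flags documented on this page (`--confirm`, `--version`, `--output`, `--signing-key`). The `SIGNING_KEY` environment variable, the function name, and the paths in the usage note are assumptions.

```shell
#!/usr/bin/env bash
set -euo pipefail

# Hypothetical release helper: build the bundle in BUNDLE_DIR without
# prompting, stamp it with VERSION, write it to OUT (a directory or an
# oci:// URL), and sign it when SIGNING_KEY points at a private key file.
create_release_bundle() {
  local bundle_dir="$1" version="$2" out="$3"
  local args=(create "$bundle_dir" --confirm --version "$version" --output "$out")
  if [[ -n "${SIGNING_KEY:-}" ]]; then
    args+=(--signing-key "$SIGNING_KEY")
  fi
  # Only create a local output directory; leave oci:// URLs untouched.
  [[ "$out" == oci://* ]] || mkdir -p "$out"
  uds "${args[@]}"
}
```

For example, `SIGNING_KEY=./cosign.key create_release_bundle ./my-bundle 0.2.0 ./dist`. The key password is deliberately not passed on the command line here, because command-line arguments can be visible to other processes on the host.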

### Options

```
  -c, --confirm                       Confirm bundle creation without prompting
  -h, --help                          help for create
  -n, --name string                   Specify the name of the bundle
  -o, --output string                 Specify the output directory or oci:// URL for the created bundle
  -k, --signing-key string            Path to private key file for signing bundles
  -p, --signing-key-password string   Password to the private key file used for signing bundles
  -v, --version string                Specify the version of the bundle
```

### Options inherited from parent commands

```
  -a, --architecture string         Architecture for UDS bundles and Zarf packages
      --insecure                    Allow access to insecure registries and disable other recommended security enforcements such as package checksum and signature validation. This flag should only be used if you have a specific reason and accept the reduced security posture.
  -l, --log-level string            Log level when running UDS-CLI. Valid options are: warn, info, debug, trace (default "info")
      --no-color                    Disable color output
      --no-log-file                 Disable log file creation
      --no-progress                 Disable fancy UI progress bars, spinners, logos, etc
      --oci-concurrency int         Number of concurrent layer operations to perform when interacting with a remote bundle. (default 3)
      --skip-signature-validation   Skip signature validation for packages
      --tmpdir string               Specify the temporary directory to use for intermediate files
      --uds-cache string            Specify the location of the UDS cache directory (default "~/.uds-cache")
```

### SEE ALSO

* [uds](/reference/cli/commands/uds/) - CLI for UDS Bundles

-----

# uds deploy

> UDS CLI command reference for uds deploy.

## uds deploy

Deploy a bundle from a local tarball or oci:// URL

```
uds deploy [BUNDLE_TARBALL|OCI_REF] [flags]
```
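A common pattern is to keep deployment variables in a file and pass each one as its own `--set KEY=value` flag. The sketch below assumes a hypothetical variables file with one `KEY=value` per line, ignoring blank lines and `#` comments. The helper names are illustrative and not part of UDS CLI. Because `--confirm` skips the confirmation prompt, only use it with bundles you trust, as the flag description warns.

```shell
#!/usr/bin/env bash
set -euo pipefail

# Read KEY=value pairs (one per line; blank lines and '#' comments skipped)
# into the global array SET_FLAGS as repeated --set arguments.
load_set_flags() {
  local file="$1" line
  SET_FLAGS=()
  while IFS= read -r line || [[ -n "$line" ]]; do
    [[ -z "$line" || "$line" == \#* ]] && continue
    SET_FLAGS+=(--set "$line")
  done < "$file"
}

# Deploy BUNDLE (tarball path or oci:// ref) with variables from VARS_FILE.
deploy_with_vars() {
  local bundle="$1" vars_file="$2"
  load_set_flags "$vars_file"
  uds deploy "$bundle" --confirm "${SET_FLAGS[@]}"
}
```

Passing one pair per `--set` keeps each value separate on the command line. The `|| [[ -n "$line" ]]` check ensures a final line without a trailing newline is still read.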

### Options

```
  -c, --confirm                  Confirms bundle deployment without prompting. ONLY use with bundles you trust
  -h, --help                     help for deploy
  -p, --packages stringArray     Specify which zarf packages you would like to deploy from the bundle. By default all zarf packages in the bundle are deployed.
  -r, --resume                   Only deploys packages from the bundle which haven't already been deployed
      --retries int              Specify the number of retries for package deployments (applies to all pkgs in a bundle) (default 3)
      --set stringToString       Specify deployment variables to set on the command line (KEY=value) (default [])
```

### Options inherited from parent commands

```
  -a, --architecture string         Architecture for UDS bundles and Zarf packages
      --insecure                    Allow access to insecure registries and disable other recommended security enforcements such as package checksum and signature validation. This flag should only be used if you have a specific reason and accept the reduced security posture.
  -l, --log-level string            Log level when running UDS-CLI. Valid options are: warn, info, debug, trace (default "info")
      --no-color                    Disable color output
      --no-log-file                 Disable log file creation
      --no-progress                 Disable fancy UI progress bars, spinners, logos, etc
      --oci-concurrency int         Number of concurrent layer operations to perform when interacting with a remote bundle. (default 3)
      --skip-signature-validation   Skip signature validation for packages
      --tmpdir string               Specify the temporary directory to use for intermediate files
      --uds-cache string            Specify the location of the UDS cache directory (default "~/.uds-cache")
```

### SEE ALSO

* [uds](/reference/cli/commands/uds/) - CLI for UDS Bundles

-----

# uds dev

> UDS CLI command reference for uds dev.

## uds dev

[beta] Commands useful for developing bundles

### Options

```
  -h, --help   help for dev
```

### Options inherited from parent commands

```
  -a, --architecture string         Architecture for UDS bundles and Zarf packages
      --insecure                    Allow access to insecure registries and disable other recommended security enforcements such as package checksum and signature validation. This flag should only be used if you have a specific reason and accept the reduced security posture.
  -l, --log-level string            Log level when running UDS-CLI. Valid options are: warn, info, debug, trace (default "info")
      --no-color                    Disable color output
      --no-log-file                 Disable log file creation
      --no-progress                 Disable fancy UI progress bars, spinners, logos, etc
      --oci-concurrency int         Number of concurrent layer operations to perform when interacting with a remote bundle. (default 3)
      --skip-signature-validation   Skip signature validation for packages
      --tmpdir string               Specify the temporary directory to use for intermediate files
      --uds-cache string            Specify the location of the UDS cache directory (default "~/.uds-cache")
```

### SEE ALSO

* [uds](/reference/cli/commands/uds/) - CLI for UDS Bundles

* [uds dev deploy](/reference/cli/commands/uds_dev_deploy/) - [beta] Creates and deploys a UDS bundle in dev mode

-----

# uds dev deploy

> UDS CLI command reference for uds dev deploy.

## uds dev deploy

[beta] Creates and deploys a UDS bundle in dev mode

### Synopsis

[beta] Creates and deploys a UDS bundle from a given directory or OCI repository in dev mode, setting package options like YOLO mode for faster iteration.

```
uds dev deploy [BUNDLE_DIR|OCI_REF] [flags]
```

### Options

```
  -f, --flavor string            [beta] Specify which zarf package flavor you want to use.
      --force-create             [beta] For local bundles with local packages, specify whether to create a zarf package even if it already exists.
  -h, --help                     help for deploy
  -p, --packages stringArray     Specify which zarf packages you would like to deploy from the bundle. By default all zarf packages in the bundle are deployed.
  -r, --ref stringToString       Specify which zarf package ref you want to deploy. By default the ref set in the bundle yaml is used. (default [])
      --set stringToString       Specify deployment variables to set on the command line (KEY=value) (default [])
```

### Options inherited from parent commands

```
  -a, --architecture string         Architecture for UDS bundles and Zarf packages
      --insecure                    Allow access to insecure registries and disable other recommended security enforcements such as package checksum and signature validation. This flag should only be used if you have a specific reason and accept the reduced security posture.
  -l, --log-level string            Log level when running UDS-CLI. Valid options are: warn, info, debug, trace (default "info")
      --no-color                    Disable color output
      --no-log-file                 Disable log file creation
      --no-progress                 Disable fancy UI progress bars, spinners, logos, etc
      --oci-concurrency int         Number of concurrent layer operations to perform when interacting with a remote bundle. (default 3)
      --skip-signature-validation   Skip signature validation for packages
      --tmpdir string               Specify the temporary directory to use for intermediate files
      --uds-cache string            Specify the location of the UDS cache directory (default "~/.uds-cache")
```

### SEE ALSO

* [uds dev](/reference/cli/commands/uds_dev/) - [beta] Commands useful for developing bundles

-----

# uds inspect

> UDS CLI command reference for uds inspect.

## uds inspect

Display the metadata of a bundle

```
uds inspect [BUNDLE_TARBALL|OCI_REF|BUNDLE_YAML_FILE] [flags]
```
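Before deploying a bundle with `--confirm`, it can be worth checking its signature and reviewing its configurable variables. The helper below is a hypothetical pre-deploy check built only from flags documented on this page (`--key` and `--list-variables`). Whether a public key applies depends on whether the bundle was signed, and the key path in the usage note is a placeholder.

```shell
#!/usr/bin/env bash
set -euo pipefail

# Hypothetical pre-deploy review: validate the bundle signature when a
# public key is given, then list every configurable variable in the bundle.
review_bundle() {
  local bundle="$1" pubkey="${2:-}"
  if [[ -n "$pubkey" ]]; then
    uds inspect "$bundle" --key "$pubkey"
  fi
  uds inspect "$bundle" --list-variables
}
```

For example, `review_bundle uds-bundle-example-amd64-0.1.0.tar.zst ./cosign.pub`, where both file names are placeholders.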

### Options

```
  -e, --extract            Create a folder of SBOMs contained in the bundle
  -h, --help               help for inspect
  -k, --key string         Path to a public key file that will be used to validate a signed bundle
  -i, --list-images        Derive images from a uds-bundle.yaml file and list them
  -v, --list-variables     List all configurable variables in a bundle (including zarf variables)
  -s, --sbom               Create a tarball of SBOMs contained in the bundle
```

### Options inherited from parent commands

```
  -a, --architecture string         Architecture for UDS bundles and Zarf packages
      --insecure                    Allow access to insecure registries and disable other recommended security enforcements such as package checksum and signature validation. This flag should only be used if you have a specific reason and accept the reduced security posture.
  -l, --log-level string            Log level when running UDS-CLI. Valid options are: warn, info, debug, trace (default "info")
      --no-color                    Disable color output
      --no-log-file                 Disable log file creation
      --no-progress                 Disable fancy UI progress bars, spinners, logos, etc
      --oci-concurrency int         Number of concurrent layer operations to perform when interacting with a remote bundle. (default 3)
      --skip-signature-validation   Skip signature validation for packages
      --tmpdir string               Specify the temporary directory to use for intermediate files
      --uds-cache string            Specify the location of the UDS cache directory (default "~/.uds-cache")
```

### SEE ALSO

* [uds](/reference/cli/commands/uds/) - CLI for UDS Bundles

-----

# uds list

> UDS CLI command reference for uds list.

## uds list

[alpha] List deployed bundles in the cluster

```
uds list [flags]
```

### Options

```
  -h, --help   help for list
```

### Options inherited from parent commands

```
  -a, --architecture string         Architecture for UDS bundles and Zarf packages
      --insecure                    Allow access to insecure registries and disable other recommended security enforcements such as package checksum and signature validation. This flag should only be used if you have a specific reason and accept the reduced security posture.
  -l, --log-level string            Log level when running UDS-CLI. Valid options are: warn, info, debug, trace (default "info")
      --no-color                    Disable color output
      --no-log-file                 Disable log file creation
      --no-progress                 Disable fancy UI progress bars, spinners, logos, etc
      --oci-concurrency int         Number of concurrent layer operations to perform when interacting with a remote bundle. (default 3)
      --skip-signature-validation   Skip signature validation for packages
      --tmpdir string               Specify the temporary directory to use for intermediate files
      --uds-cache string            Specify the location of the UDS cache directory (default "~/.uds-cache")
```

### SEE ALSO

* [uds](/reference/cli/commands/uds/) - CLI for UDS Bundles

-----

# uds logs

> UDS CLI command reference for uds logs.

## uds logs

View most recent UDS CLI logs

```
uds logs [flags]
```

### Options

```
  -h, --help   help for logs
```

### Options inherited from parent commands

```
  -a, --architecture string         Architecture for UDS bundles and Zarf packages
      --insecure                    Allow access to insecure registries and disable other recommended security enforcements such as package checksum and signature validation. This flag should only be used if you have a specific reason and accept the reduced security posture.
  -l, --log-level string            Log level when running UDS-CLI. Valid options are: warn, info, debug, trace (default "info")
      --no-color                    Disable color output
      --no-log-file                 Disable log file creation
      --no-progress                 Disable fancy UI progress bars, spinners, logos, etc
      --oci-concurrency int         Number of concurrent layer operations to perform when interacting with a remote bundle. (default 3)
      --skip-signature-validation   Skip signature validation for packages
      --tmpdir string               Specify the temporary directory to use for intermediate files
      --uds-cache string            Specify the location of the UDS cache directory (default "~/.uds-cache")
```

### SEE ALSO

* [uds](/reference/cli/commands/uds/) - CLI for UDS Bundles

-----

# uds monitor

> UDS CLI command reference for uds monitor.

## uds monitor

Monitor a UDS Cluster

### Synopsis

Tools for monitoring a UDS Cluster and connecting to the UDS Engine for advanced troubleshooting

### Options

```
  -h, --help               help for monitor
  -n, --namespace string   Limit monitoring to a specific namespace
```

### Options inherited from parent commands

```
  -a, --architecture string         Architecture for UDS bundles and Zarf packages
      --insecure                    Allow access to insecure registries and disable other recommended security enforcements such as package checksum and signature validation. This flag should only be used if you have a specific reason and accept the reduced security posture.
  -l, --log-level string            Log level when running UDS-CLI. Valid options are: warn, info, debug, trace (default "info")
      --no-color                    Disable color output
      --no-log-file                 Disable log file creation
      --no-progress                 Disable fancy UI progress bars, spinners, logos, etc
      --oci-concurrency int         Number of concurrent layer operations to perform when interacting with a remote bundle. (default 3)
      --skip-signature-validation   Skip signature validation for packages
      --tmpdir string               Specify the temporary directory to use for intermediate files
      --uds-cache string            Specify the location of the UDS cache directory (default "~/.uds-cache")
```

### SEE ALSO

* [uds](/reference/cli/commands/uds/) - CLI for UDS Bundles

* [uds monitor pepr](/reference/cli/commands/uds_monitor_pepr/) - Observe Pepr operations in a UDS Cluster

-----

# uds monitor pepr

> UDS CLI command reference for uds monitor pepr.

## uds monitor pepr

Observe Pepr operations in a UDS Cluster

### Synopsis

View UDS Policy enforcements, UDS Operator events and additional Pepr operations

```
uds monitor pepr [policies | operator | allowed | denied | failed | mutated] [flags]
```

### Examples

```
# Aggregates all admission and operator logs into a single stream
uds monitor pepr

# Stream UDS Operator actions (Package processing, status updates, and errors)
uds monitor pepr operator

# Stream UDS Policy logs (Allow, Deny, Mutate)
uds monitor pepr policies

# Stream UDS Policy allow logs
uds monitor pepr allowed

# Stream UDS Policy deny logs
uds monitor pepr denied

# Stream UDS Policy mutation logs
uds monitor pepr mutated

# Stream UDS Policy deny logs and UDS Operator error logs
uds monitor pepr failed
```
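
The stream filters above can be combined with the options listed below. As a sketch, assuming a workload namespace named `podinfo` (a placeholder), `--namespace` is inherited from `uds monitor`:

```bash
# Follow UDS Policy deny events in the podinfo namespace with timestamps,
# starting from events in the last 10 minutes
uds monitor pepr denied --follow --timestamps --since 10m -n podinfo

# Print the last hour of UDS Operator logs as raw JSON
uds monitor pepr operator --json --since 1h
```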

### Options

```
  -f, --follow           Continuously stream Pepr logs
  -h, --help             help for pepr
      --json             Return the raw JSON output of the logs
      --since duration   Only return logs newer than a relative duration like 5s, 2m, or 3h. Defaults to all logs.
  -t, --timestamps       Show timestamps in Pepr logs
```

### Options inherited from parent commands

```
  -a, --architecture string         Architecture for UDS bundles and Zarf packages
      --insecure                    Allow access to insecure registries and disable other recommended security enforcements such as package checksum and signature validation. This flag should only be used if you have a specific reason and accept the reduced security posture.
  -l, --log-level string            Log level when running UDS-CLI. Valid options are: warn, info, debug, trace (default "info")
  -n, --namespace string            Limit monitoring to a specific namespace
      --no-color                    Disable color output
      --no-log-file                 Disable log file creation
      --no-progress                 Disable fancy UI progress bars, spinners, logos, etc
      --oci-concurrency int         Number of concurrent layer operations to perform when interacting with a remote bundle. (default 3)
      --skip-signature-validation   Skip signature validation for packages
      --tmpdir string               Specify the temporary directory to use for intermediate files
      --uds-cache string            Specify the location of the UDS cache directory (default "~/.uds-cache")
```

### SEE ALSO

* [uds monitor](/reference/cli/commands/uds_monitor/) - Monitor a UDS Cluster

-----

# uds publish

> UDS CLI command reference for uds publish.

## uds publish

Publish a bundle from the local file system to a remote registry

```
uds publish [BUNDLE_TARBALL] [OCI_REF] [flags]
```

### Options

```
  -h, --help             help for publish
  -v, --version string   [Deprecated] Specify the version of the bundle to be published. This flag will be removed in a future version. Users should use the --version flag during creation to override the version defined in uds-bundle.yaml
```

### Options inherited from parent commands

```
  -a, --architecture string         Architecture for UDS bundles and Zarf packages
      --insecure                    Allow access to insecure registries and disable other recommended security enforcements such as package checksum and signature validation. This flag should only be used if you have a specific reason and accept the reduced security posture.
  -l, --log-level string            Log level when running UDS-CLI. Valid options are: warn, info, debug, trace (default "info")
      --no-color                    Disable color output
      --no-log-file                 Disable log file creation
      --no-progress                 Disable fancy UI progress bars, spinners, logos, etc
      --oci-concurrency int         Number of concurrent layer operations to perform when interacting with a remote bundle. (default 3)
      --skip-signature-validation   Skip signature validation for packages
      --tmpdir string               Specify the temporary directory to use for intermediate files
      --uds-cache string            Specify the location of the UDS cache directory (default "~/.uds-cache")
```
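
### Examples

A minimal sketch of publishing a locally created bundle. The tarball name and the `oci://ghcr.io/my-org/bundles` destination are placeholders, and the example assumes you are already authenticated to the target registry.

```bash
# Push a local bundle tarball to a remote OCI registry (both names are placeholders)
uds publish uds-bundle-example-amd64-0.0.1.tar.zst oci://ghcr.io/my-org/bundles
```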

### SEE ALSO

* [uds](/reference/cli/commands/uds/) - CLI for UDS Bundles

-----

# uds pull

> UDS CLI command reference for uds pull.

## uds pull

Pull a bundle from a remote registry and save to the local file system

```
uds pull [OCI_REF] [flags]
```

### Options

```
  -h, --help            help for pull
  -k, --key string      Path to a public key file that will be used to validate a signed bundle
  -o, --output string   Specify the output directory for the pulled bundle
```

### Options inherited from parent commands

```
  -a, --architecture string         Architecture for UDS bundles and Zarf packages
      --insecure                    Allow access to insecure registries and disable other recommended security enforcements such as package checksum and signature validation. This flag should only be used if you have a specific reason and accept the reduced security posture.
  -l, --log-level string            Log level when running UDS-CLI. Valid options are: warn, info, debug, trace (default "info")
      --no-color                    Disable color output
      --no-log-file                 Disable log file creation
      --no-progress                 Disable fancy UI progress bars, spinners, logos, etc
      --oci-concurrency int         Number of concurrent layer operations to perform when interacting with a remote bundle. (default 3)
      --skip-signature-validation   Skip signature validation for packages
      --tmpdir string               Specify the temporary directory to use for intermediate files
      --uds-cache string            Specify the location of the UDS cache directory (default "~/.uds-cache")
```
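
### Examples

The following pulls a bundle into a local directory and validates its signature. The OCI reference, output directory, and `cosign.pub` key file are placeholders.

```bash
# Save the bundle tarball under ./bundles, validating it with a public key
# (registry path, tag, and key file are placeholders)
uds pull oci://ghcr.io/my-org/bundles/example:0.0.1 --output ./bundles --key cosign.pub
```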

### SEE ALSO

* [uds](/reference/cli/commands/uds/) - CLI for UDS Bundles

-----

# uds remove

> UDS CLI command reference for uds remove.

## uds remove

Remove a bundle that has been deployed already

```
uds remove [BUNDLE_TARBALL|OCI_REF] [flags]
```

### Options

```
  -c, --confirm                REQUIRED. Confirm the removal action to prevent accidental deletions
  -h, --help                   help for remove
  -p, --packages stringArray   Specify which zarf packages you would like to remove from the bundle. By default all zarf packages in the bundle are removed.
```

### Options inherited from parent commands

```
  -a, --architecture string         Architecture for UDS bundles and Zarf packages
      --insecure                    Allow access to insecure registries and disable other recommended security enforcements such as package checksum and signature validation. This flag should only be used if you have a specific reason and accept the reduced security posture.
  -l, --log-level string            Log level when running UDS-CLI. Valid options are: warn, info, debug, trace (default "info")
      --no-color                    Disable color output
      --no-log-file                 Disable log file creation
      --no-progress                 Disable fancy UI progress bars, spinners, logos, etc
      --oci-concurrency int         Number of concurrent layer operations to perform when interacting with a remote bundle. (default 3)
      --skip-signature-validation   Skip signature validation for packages
      --tmpdir string               Specify the temporary directory to use for intermediate files
      --uds-cache string            Specify the location of the UDS cache directory (default "~/.uds-cache")
```
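
### Examples

Because `--confirm` is required, every removal must pass it explicitly. The tarball name and the `podinfo` package name are placeholders borrowed from the Quickstart bundle.

```bash
# Remove every Zarf package in the bundle (tarball name is a placeholder)
uds remove uds-bundle-example-amd64-0.0.1.tar.zst --confirm

# Remove only the podinfo package; repeat --packages to select more than one
uds remove uds-bundle-example-amd64-0.0.1.tar.zst --confirm --packages podinfo
```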

### SEE ALSO

* [uds](/reference/cli/commands/uds/) - CLI for UDS Bundles

-----

# uds run

> UDS CLI command reference for uds run.

## uds run

Run a task using maru-runner

```
uds run [flags]
```

### Options

```
  -h, --help   help for run
```

### Options inherited from parent commands

```
  -a, --architecture string         Architecture for UDS bundles and Zarf packages
      --insecure                    Allow access to insecure registries and disable other recommended security enforcements such as package checksum and signature validation. This flag should only be used if you have a specific reason and accept the reduced security posture.
  -l, --log-level string            Log level when running UDS-CLI. Valid options are: warn, info, debug, trace (default "info")
      --no-color                    Disable color output
      --no-log-file                 Disable log file creation
      --no-progress                 Disable fancy UI progress bars, spinners, logos, etc
      --oci-concurrency int         Number of concurrent layer operations to perform when interacting with a remote bundle. (default 3)
      --skip-signature-validation   Skip signature validation for packages
      --tmpdir string               Specify the temporary directory to use for intermediate files
      --uds-cache string            Specify the location of the UDS cache directory (default "~/.uds-cache")
```

### SEE ALSO

* [uds](/reference/cli/commands/uds/) - CLI for UDS Bundles

-----

# uds version

> UDS CLI command reference for uds version.

## uds version

Shows the version of the running UDS-CLI binary

### Synopsis

Displays the version of the UDS-CLI release that the current binary was built from.

```
uds version [flags]
```

### Options

```
  -h, --help   help for version
```

### Options inherited from parent commands

```
  -a, --architecture string         Architecture for UDS bundles and Zarf packages
      --insecure                    Allow access to insecure registries and disable other recommended security enforcements such as package checksum and signature validation. This flag should only be used if you have a specific reason and accept the reduced security posture.
  -l, --log-level string            Log level when running UDS-CLI. Valid options are: warn, info, debug, trace (default "info")
      --no-color                    Disable color output
      --no-log-file                 Disable log file creation
      --no-progress                 Disable fancy UI progress bars, spinners, logos, etc
      --oci-concurrency int         Number of concurrent layer operations to perform when interacting with a remote bundle. (default 3)
      --skip-signature-validation   Skip signature validation for packages
      --tmpdir string               Specify the temporary directory to use for intermediate files
      --uds-cache string            Specify the location of the UDS cache directory (default "~/.uds-cache")
```

### SEE ALSO

* [uds](/reference/cli/commands/uds/) - CLI for UDS Bundles

-----

# Overview

The [UDS CLI](https://github.com/defenseunicorns/uds-cli) is the primary interface for users to interact with various components within the UDS landscape. It streamlines the deployment process of mission applications and secure infrastructure, simplifying tasks involved in running mission applications while maintaining regulatory compliance in a unified and efficient manner.

The UDS CLI simplifies deployment by bundling multiple Zarf Packages into a single deployable artifact. This process ensures that UDS Bundles, which encompass infrastructure, platform, and mission applications, can be efficiently deployed within any Mission Hero's system environment. Additionally, the UDS CLI extends its capabilities to Pepr, where multiple Pepr applications are bundled and deployed as a single Pepr Module to support UDS Bundles during runtime.

-----

# Quickstart and Usage

## Install

The recommended installation method is Homebrew:

```bash
brew tap defenseunicorns/tap && brew install uds
```

UDS CLI binaries are also included with each [GitHub Release](https://github.com/defenseunicorns/uds-cli/releases).

## Contributing

Build instructions and contributing docs are located in [CONTRIBUTING.md](https://github.com/defenseunicorns/uds-cli/blob/main/CONTRIBUTING.md).

## Quickstart

The UDS CLI's flagship feature is deploying multiple, independent Zarf packages. To create a `UDSBundle` of Zarf packages, create a `uds-bundle.yaml` file like so:

```yaml
kind: UDSBundle
metadata:
  name: example
  description: an example UDS bundle
  version: 0.0.1

packages:
  - name: init
    repository: ghcr.io/defenseunicorns/packages/init
    ref: v0.33.0
    optionalComponents:
      - git-server

  - name: podinfo
    repository: ghcr.io/defenseunicorns/uds-cli/podinfo
    ref: 0.0.1
```

The above `UDSBundle` deploys the Zarf init package and podinfo.

The packages referenced in `packages` can exist either locally or in an OCI registry. See [here](https://github.com/defenseunicorns/uds-cli/tree/main/src/test/bundles/03-local-and-remote) for an example that deploys both local and remote Zarf packages. More `UDSBundle` examples can be found in the [src/test/bundles](https://github.com/defenseunicorns/uds-cli/tree/main/src/test/bundles) folder.
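
Once `uds-bundle.yaml` is written, the bundle is built with `uds create` and deployed with `uds deploy`. Neither command is documented in this section, so treat the following as a sketch and check the flags with `uds create --help` and `uds deploy --help`. The tarball glob assumes the `uds-bundle-<name>-<arch>-<version>.tar.zst` naming convention.

```bash
# Build the bundle from the directory that contains uds-bundle.yaml
uds create . --confirm

# Deploy the resulting tarball without an interactive prompt
# (the glob assumes exactly one matching file in the current directory)
uds deploy uds-bundle-example-*-0.0.1.tar.zst --confirm
```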

### Declarative Syntax

The syntax of a `uds-bundle.yaml` is entirely declarative. As a result, the UDS CLI will not prompt users to deploy optional components in a Zarf package. If you want to deploy an optional Zarf component, it must be specified in the `optionalComponents` key of a particular `package`.

### First-class UDS Support

When running `deploy`,`inspect`,`remove`, and `pull` commands, UDS CLI contains shorthand for interacting with the Defense Unicorns org on GHCR. Specifically, unless otherwise specified, paths will automatically be expanded to the Defense Unicorns org on GHCR. For example:

- `uds deploy unicorn-bundle:v0.1.0` is equivalent to `uds deploy ghcr.io/defenseunicorns/packages/uds/bundles/unicorn-bundle:v0.1.0`

The bundle matching and expansion is ordered as follows:

1. Local with a `tar.zst` extension

2. Remote path: `oci://ghcr.io/defenseunicorns/packages/uds/bundles/**Container Registry:** It provides a centralized location for storing and managing container images, facilitating seamless deployment and version control.

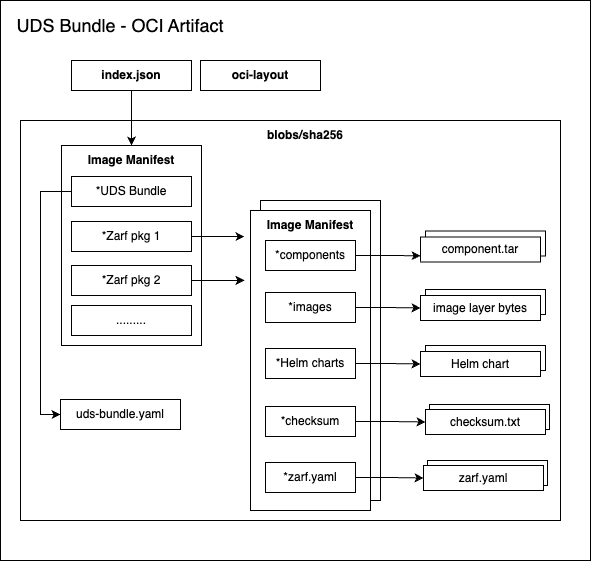

**Secret Storage:** Securely manage and access sensitive data like credentials and configurations. | | **GitLab Runner** | **Continuous Integration:** GitLab Runner is a Continuous Integration runner that integrates with GitLab, facilitating automated builds, testing, and deployment of your applications. | | **Mattermost** | **Online Chat Service:** Mattermost is an open-source, self-hostable online chat service, providing a platform for real-time communication and collaboration within teams. | | **SonarQube** | **Code Quality:** SonarQube continuously evaluates code quality and identifies issues, helping maintain code integrity and reduce technical debt. | :::note If you are interested in learning more about Software Factory or would like to receive a demo, please [contact us](https://www.defenseunicorns.com/contactus)! ::: ----- # Software Factory Bundles :::note The following UDS Bundles are designed specifically for development and testing environments and are *not intended for production use*. ::: ## [swf-dev](https://github.com/defenseunicorns/uds-software-factory/tree/main/bundles/dev) **Bundle Overview** This bundle is primarily for development purposes and requires an existing K3d cluster to deploy. **System Requirements** This bundle requires `9 CPUs and 28GB of memory` available to run effectively. **Bundle Applications** | Application | Description | | ----------------- | ----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- | | Minio | In-cluster S3 Object Storage solution. | | Postgres Operator | In-cluster PostgreSQL Database management tool. | | GitLab | A comprehensive DevOps software package facilitating software development, security, and operational tasks. | | GitLab Runner | A Continuous Integration (CI) runner tightly integrated with GitLab, streamlining automation of build, test, and deployment workflows. 
| | Mattermost | An open-source, self-hostable online chat service empowering real-time communication for teams and organizations. | | SonarQube | An open-source platform developed by SonarSource, dedicated to the continuous inspection of code quality, ensuring adherence to high standards across the software development lifecycle. | ## [k3d-swf-demo](https://github.com/defenseunicorns/uds-software-factory/tree/main/bundles/k3d-demo) **Bundle Overview** Demo bundle of Software Factory deployed on top of [UDS Core](https://github.com/defenseunicorns/uds-core) that includes the deployment of an underlying K3d cluster. **System Requirements** - This bundle requires a minimum of `11 CPUs and 32GB of memory` available to run effectively. - This bundle is best deployed on an adequately sized Linux machine with Docker or equivalent installed. **Bundle Applications** | Application | Description | | ----------------- | ----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------- | | UDS-K3d | Containerized K3s with opinionated deployment for UDS development. | | Minio | In-cluster S3 Object Storage solution. | | Postgres Operator | In-cluster PostgreSQL Database management tool. | | UDS Core | Comprehensive suite including Service Mesh, IdAM, Monitoring, Logging, Metrics, UDS Policy Engine and Operator, Container Security, Backup and Restore functionalities. | | GitLab | A comprehensive DevOps software package facilitating software development, security, and operational tasks. | | GitLab Runner | A Continuous Integration (CI) runner tightly integrated with GitLab, streamlining automation of build, test, and deployment workflows. | | Mattermost | An open-source, self-hostable online chat service empowering real-time communication for teams and organizations. 
| | SonarQube | An open-source platform developed by SonarSource, dedicated to the continuous inspection of code quality, ensuring adherence to high standards across the software development lifecycle. | ----- # Your App Your Environment Your App Your Environment revolutionizes application deployment for Mission Heroes, providing a seamless process for selecting, deploying, and managing mission-critical software on a Kubernetes cluster. Leveraging UDS and an array of open-source projects, Your App Your Environment is engineered to handle complex challenges, including egress-limited or air-gapped software delivery, ensuring robust and efficient deployment solutions. Offering flexibility, Your App Your Environment delivers cloud-native applications tailored to the unique needs of mission teams, empowering them to succeed in any environment, whether in the cloud, on-premises, or at the tactical edge. By integrating with Defense Unicorns' recommended DevSecOps Reference Guide compliant architecture, Your App Your Environment ensures compliance and security by default, providing a secure baseline with documented NIST 800-53 controls. Your App Your Environment meets 70% of technical security controls out of the box, significantly accelerating application delivery timelines towards achieving Authority to Operate (ATO). With Your App Your Environment, teams maintain ownership and independence over their applications, avoiding reliance on vendor-locked solutions, and can package and deploy applications across various environments, guaranteeing compatibility and adaptability to diverse operational scenarios. ## Key Features **Accelerate Authorization:** Leverage Defense Unicorns' recommended DevSecOps Reference Guide compliant architecture to integrate your app with a secure baseline that comes with documented NIST 800-53 controls. 

**Mission Ownership:** Your App Your Environment enables teams to maintain ownership of their application, preventing reliance on vendor-locked solutions.

**Deploy Anywhere:** Your App Your Environment packages and delivers your mission application across multiple environments, including cloud, on-premises, and tactical edge.

:::note
If you are interested in learning more about Your App Your Environment, please [contact us](https://www.defenseunicorns.com/contactus)!
:::

-----

# Bundle Overrides

Bundle overrides provide a mechanism to customize Helm charts inside of Zarf packages.

## Quickstart

Consider the following `zarf.yaml` and `values.yaml`, which deploy [podinfo](https://github.com/stefanprodan/podinfo) with a couple of custom values:

```yaml
# zarf.yaml
kind: ZarfPackageConfig
metadata:
  name: helm-overrides-package
  version: 0.0.1

components:
  - name: helm-overrides-component
    required: true
    charts:
      - name: podinfo
        version: 6.4.0
        namespace: podinfo
        url: https://github.com/stefanprodan/podinfo.git
        gitPath: charts/podinfo
        valuesFiles:
          - values.yaml
    images:
      - ghcr.io/stefanprodan/podinfo:6.4.0
---
# values.yaml
replicaCount: 1
ui:
  color: blue
```

The bundle overrides feature allows users to override the values specified in Zarf packages. For example:

```yaml
kind: UDSBundle
metadata:
  name: helm-overrides
  description: testing a bundle with Helm overrides
  version: 0.0.1

packages:
  - name: helm-overrides-package
    path: "path/to/pkg"
    ref: 0.0.1
    overrides:
      helm-overrides-component:
        podinfo:
          valuesFiles:
            - values.yaml
          values:
            - path: "replicaCount"
              value: 2
          variables:
            - name: UI_COLOR
              path: "ui.color"
              description: "Set the color for podinfo's UI"
              default: "purple"
```

```yaml
# values.yaml
podAnnotations:
  customAnnotation: "customValue"
```

This bundle will deploy the `helm-overrides-package` Zarf package and override the `replicaCount`, `ui.color`, and `podAnnotations` values in the `podinfo` chart. The `values` can't be modified after the bundle has been created.

However, at deploy time, users can override `UI_COLOR` and other `variables` using an environment variable called `UDS_UI_COLOR` or by specifying it in a `uds-config.yaml` like so:

```yaml
variables:
  helm-overrides-package:
    UI_COLOR: green
```

## Overrides

### Syntax

Consider the following bundle `overrides`:

```yaml
packages:
  - name: helm-overrides-package
    path: "path/to/pkg"
    ref: 0.0.1
    overrides:
      helm-overrides-component: # component name inside of the helm-overrides-package Zarf pkg
        podinfo: # chart name from the helm-overrides-component component
          valuesFiles:
            - values.yaml
          values:
            - path: "replicaCount"
              value: 2
          variables:
            - name: UI_COLOR
              path: "ui.color"
              description: "Set the color for podinfo's UI"
              default: "purple"
```

```yaml
# values.yaml
podAnnotations:
  customAnnotation: "customValue"
```

In this example, the `helm-overrides-package` Zarf package has a component called `helm-overrides-component`, which contains a Helm chart called `podinfo`; note how these names are keys in the `overrides` block. The `podinfo` chart has a `replicaCount` value that is overridden to `2`, a `podAnnotations` value that is overridden to include `customAnnotation: "customValue"`, and a variable called `UI_COLOR` that sets `ui.color` and defaults to `purple`.

### Values Files

The `valuesFiles` in an `overrides` block are a list of files. They allow users to override multiple values in a Zarf package component's underlying Helm chart by providing a file with those values, instead of having to include them all individually in the `overrides` block.

### Values

The `values` in an `overrides` block are a list of `path` and `value` pairs. They allow users to override values in a Zarf package component's underlying Helm chart. Note that values are specified by bundle authors and **cannot be modified** after the bundle has been created.

#### Path

The `path` uses dot notation to specify the location of a value to override in the underlying Helm chart.
For example, the `replicaCount` path in the `podinfo` chart is located at the top level of the [podinfo values.yaml](https://github.com/stefanprodan/podinfo/blob/master/charts/podinfo/values.yaml), so the path is simply `replicaCount`, while the `ui.color` path is located under the `ui` key, so the path is `ui.color`.

#### Value

The `value` is the value to set at the `path`. Values can be simple values, such as numbers and strings, as well as complex lists and objects. For example:

```yaml
---
overrides:
  helm-overrides-component:
    podinfo:
      values:
        - path: "podinfo.tolerations"
          value:
            - key: "unicorn"
              operator: "Equal"
              value: "defense"
              effect: "NoSchedule"
        - path: podinfo.podAnnotations
          value:
            customAnnotation: "customValue"
```

#### Bundle Variables as Values

Bundle and Zarf variables can be used to set override values by using the syntax `${...}`. For example:

```yaml
# uds-config.yaml
variables:
  helm-overrides-package:
    replica_count: 2
```

```yaml
kind: UDSBundle
metadata:
  name: example-bundle
  description: Example for using an imported variable to set an overrides value
  version: 0.0.1

packages:
  - name: output-var
    repository: localhost:888/output-var
    ref: 0.0.1
    exports:
      - name: COLOR

  - name: helm-overrides-package
    path: "../../packages/helm"
    ref: 0.0.1
    overrides:
      podinfo-component:
        unicorn-podinfo:
          values:
            - path: "podinfo.replicaCount"
              value: ${REPLICA_COUNT}
            - path: "podinfo.ui.color"
              value: ${COLOR}
```

In the example above, `${REPLICA_COUNT}` is set in the `uds-config.yaml` file and `${COLOR}` is set as an export from the `output-var` package. Note that you could also set these values with the `shared` key in a `uds-config.yaml`, with environment variables prefixed with `UDS_`, or with the `--set` flag during deployment.

#### Value Precedence

Value precedence is as follows:

1. The `values` in an `overrides` block
1. `values` set in the last `valuesFile` (if more than one specified)
1. `values` set in the previous `valuesFile` (if more than one specified)

### Variables

Variables are similar to [values](#values) in that they allow users to override values in a Zarf package component's underlying Helm chart; they also share a similar syntax. However, unlike `values`, `variables` can be overridden at deploy time. For example, consider the `variables` key in the following `uds-bundle.yaml`:

```yaml
kind: UDSBundle
metadata:
  name: example-bundle
  version: 0.0.1

packages:
  - name: helm-overrides-package
    path: "../../packages/helm"
    ref: 0.0.1
    overrides:
      podinfo-component:
        unicorn-podinfo:
          variables:
            - name: UI_COLOR
              path: "ui.color"
              description: "Set the color for podinfo's UI"
              default: "purple"
```

There are 3 ways to override the `UI_COLOR` variable:

1. **UDS config**: you can create a `uds-config.yaml` file in the same directory as the bundle and specify the variable to override. For example, to override the `UI_COLOR` variable, you can create a `uds-config.yaml`:

   ```yaml
   variables:
     helm-overrides-package:
       ui_color: green # Note that the variable for `UI_COLOR` can be upper or lowercase
   ```

1. **Environment variables**: you can create an environment variable prefixed with `UDS_` and the name of the variable. For example, to override the `UI_COLOR` variable, you can create an environment variable called `UDS_UI_COLOR` and set it to the desired value. Note that environment variables take precedence over `uds-config.yaml` variables.

1. **--set Flag**: you can also override the variable using the CLI's `--set` flag.
For example, to override the `UI_COLOR` variable, you can run one of the following commands: ```bash # by default ui_color will apply to all packages in the bundle uds deploy example-bundle --set ui_color=green # to specify a specific package that the variable should apply to you can prepend the package name to the variable uds deploy example-bundle --set helm-overrides-package.ui_color=green ``` :::caution Because Helm override variables and Zarf variables share the same --set syntax, be careful with variable names to avoid conflicts. ::: :::note A variable that is not overridden by any of the methods above and has no default will be ignored. ::: #### Variable Precedence Variable precedence is as follows: 1. The `--set` flag 1. Environment variables 1. `uds-config.yaml` variables 1. Variables `default` in the`uds-bundle.yaml` #### Variable Types Variables can be of either type `raw` or `file`. The type will default to raw if not set explicitly. :::caution If a variable is set to accept a file as its value, but is missing the `file` type, then the file will not be processed. ::: ```yaml kind: UDSBundle metadata: name: example-bundle version: 0.0.1 packages: - name: helm-overrides-package path: "../../packages/helm" ref: 0.0.1 overrides: podinfo-component: unicorn-podinfo: variables: - name: UI_COLOR path: "ui.color" description: "variable UI_COLOR accepts a raw value (e.g. a string, int, map) like "purple", which is passed to the ui.color helm path" type: raw - name: test_secret path: "testSecret" description: "variable TEST_SECRET will resolve to the contents of a file (e.g. test.cert), which gets passed to the testSecret helm path" type: file ``` **File Paths** If a file path is not absolute, it will be set as relative to the `uds-config.yaml` directory. e.g. 
the following `uds-config.yaml` is in [`src/test/bundles/07-helm-overrides/variable-files/`](https://github.com/defenseunicorns/uds-cli/blob/main/src/test/bundles/07-helm-overrides/uds-config.yaml) ```yaml variables: helm-overrides: test_secret: test.cert ``` This means when `test.cert` is evaluated it will first be appended to the config path like so `src/test/bundles/07-helm-overrides/variable-files/test.cert`. If the file path is already set to the same relative path as the config, then no merging will take place. :::note UDS CLI does not encrypt or base64 encode any file contents before passing said data to Zarf or Helm. For example, if the file contains a key to be used in a Kubernetes secret, it must be base64 encoded before being ingested by UDS CLI. ::: ### Sensitive Variables can be specified as sensitive, which means their values, regardless of how they're set, will be masked in output. ```yaml kind: UDSBundle metadata: name: example-bundle version: 0.0.1 packages: - name: helm-overrides-package path: "../../packages/helm" ref: 0.0.1 overrides: podinfo-component: unicorn-podinfo: variables: - name: SECRET_VAL path: "testSecret" description: "should be masked in output" sensitive: true ``` ### Namespace It's also possible to specify a namespace for a packaged Helm chart to be installed in. For example, to deploy the a chart in the `custom-podinfo` namespace, you can specify the `namespace` in the `overrides` block: ```yaml kind: UDSBundle metadata: name: example-bundle version: 0.0.1 packages: - name: helm-overrides-package path: "../../packages/helm" ref: 0.0.1 overrides: podinfo-component: unicorn-podinfo: namespace: custom-podinfo # custom namespace! 
          values:
            - path: "podinfo.replicaCount"
              value: 1
```

### View All Variables

When working with a local or remote bundle, you can view all overrides and Zarf variables by running `uds inspect --list-variables [BUNDLE_TARBALL|OCI_REF]`.

-----

# Overview

## Bundle Anatomy

A UDS Bundle is an OCI artifact with the following form:

-----

# Velero Cloud Provider Snapshot Support

This document describes how to enable volume snapshot support in Velero for cloud-provider-specific infrastructure in `uds-core`. This allows backup and restoration of persistent volumes via native snapshot mechanisms provided by each cloud provider.

Velero can create snapshots of Persistent Volumes using the underlying cloud provider's native storage features:

## AWS (EBS) Snapshot Support

### Enable Snapshots in UDS-Core

To enable snapshotting of EBS volumes by Velero, add the following Helm overrides to your UDS bundle:

```yaml
velero:
  velero:
    values:
      - path: snapshotsEnabled
        value: true
      - path: schedules.udsbackup.template.snapshotVolumes
        value: true
```

These values ensure that volume snapshots are included in the default `udsbackup` schedule.

### IAM Permissions for EBS Snapshotting

The Velero service account must have an IRSA role with the necessary permissions to manage EBS snapshots. Add the following IAM policy statements to your Velero IRSA role definition:

```hcl
# Example IAM policy for Velero AWS plugin
# velero aws plugin policy scope from here: https://github.com/vmware-tanzu/velero-plugin-for-aws?tab=readme-ov-file#set-permissions-for-velero
# ref policy for scoping based on tags: https://cloudonaut.io/restricting-access-to-ec2-instances-based-on-tags/
data "aws_iam_policy_document" "velero_policy" {
  statement {
    effect = "Allow"
    actions = [
      "kms:ReEncryptFrom",
      "kms:ReEncryptTo"
    ]
    # Replace
```

## Metadata

| Field | Type | Description |
|---|---|---|
| name | string (enum) | |

## Spec

| Field | Type | Description |
|---|---|---|
| attributes | Attributes | |
| networking | Networking | |
| caBundle | CaBundle | |
| expose | Expose | |
| policy | Policy | |

### Attributes

| Field | Type | Description |
|---|---|---|
| clusterName | string | Friendly name to associate with your UDS cluster |
| tags | string[] | Tags to apply to your UDS cluster |

### Networking

| Field | Type | Description |
|---|---|---|
| kubeApiCIDR | string | CIDR range for your Kubernetes control plane nodes. This is a manual override that can be used instead of relying on Pepr to automatically watch and update the values. |
| kubeNodeCIDRs | string[] | CIDR(s) for all Kubernetes nodes (not just the control plane). Like `kubeApiCIDR`, this is a manual override that can be used instead of relying on Pepr to watch and update the values. |

### CaBundle

| Field | Type | Description |
|---|---|---|
| certs | string | Contents of user provided CA bundle certificates |
| includeDoDCerts | boolean | Include DoD CA certificates in the bundle |
| includePublicCerts | boolean | Include public CA certificates in the bundle |

### Expose

| Field | Type | Description |

|---|---|---|

| domain | string | Domain all cluster services will be exposed on |

| adminDomain | string | Domain all cluster services on the admin gateway will be exposed on |

| caCert | string | The trusted CA that signed your domain certificates if using Private PKI |
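The attributes, networking, CA bundle, and expose fields above could be combined into a single cluster configuration resource roughly as follows. This is a sketch under stated assumptions, not a verified manifest. `apiVersion: uds.dev/v1alpha1` and `kind: ClusterConfig` are assumed. The `uds-cluster-config` name is also assumed, because the allowed `name` enum values are not listed in this section. Every CIDR, domain, and tag is a placeholder.

```yaml
# Sketch only: apiVersion, kind, and metadata.name are assumptions; all values are placeholders.
apiVersion: uds.dev/v1alpha1
kind: ClusterConfig
metadata:
  name: uds-cluster-config # assumed; must match the name enum allowed by the CRD
spec:
  attributes:
    clusterName: example-cluster
    tags:
      - example
  networking:
    # manual overrides instead of relying on Pepr to watch and update these values
    kubeApiCIDR: 10.0.0.0/24
    kubeNodeCIDRs:
      - 10.0.0.0/16
  caBundle:
    includeDoDCerts: true
    includePublicCerts: true
  expose:
    domain: example.com
    adminDomain: admin.example.com
```

The networking values only need to be set when you want to override what Pepr would otherwise discover. The `caCert` field is omitted because it applies only when your domain certificates come from a private PKI.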

### Policy

| Field | Type | Description |
|---|---|---|
| allowAllNsExemptions | boolean | Allow UDS Exemption custom resources to live in any namespace (default false) |

-----

# Exemptions CR (v1alpha1)

# Exemptions

| Field | Type | Description |
|---|---|---|
| spec | Spec | |

## Spec

| Field | Type | Description |
|---|---|---|
| exemptions | Exemptions[] | Policy exemptions |

### Exemptions

| Field | Type | Description |
|---|---|---|
| title | string | Title to give the exemption for reporting purposes |
| description | string | Reason this exemption is needed |
| policies | Policies[] (enum) | A list of policies to override |
| matcher | Matcher | Resource to exempt (Regex allowed for name) |
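A single exemption entry could look like the following sketch. It uses the `title`, `description`, `policies`, and `matcher` fields above, and assumes the matcher takes `namespace`, `name` (regex allowed), and `kind` fields. Several values are assumptions: `apiVersion: uds.dev/v1alpha1`, `kind: Exemption`, the `uds-policy-exemptions` namespace, the `DisallowPrivileged` policy name, and the `pod` kind value. The policy and kind enum values are not listed here, so check them against your CRD.

```yaml
# Sketch only: namespace, policy name, and matcher values are illustrative assumptions.
apiVersion: uds.dev/v1alpha1
kind: Exemption
metadata:
  name: example-exemption
  namespace: uds-policy-exemptions # assumed location when allowAllNsExemptions is false
spec:
  exemptions:
    - title: example-privileged-pod
      description: "Example workload requires privileged mode"
      policies:
        - DisallowPrivileged # assumed policy name from the Policies enum
      matcher:
        namespace: example-ns
        name: "^example-pod.*" # regex allowed for name
        kind: pod # assumed enum value
```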

#### Matcher

| Field | Type | Description |
|---|---|---|
| namespace | string | |
| name | string | |
| kind | string (enum) | |

-----

# Packages CR (v1alpha1)

# Packages

| Field | Type | Description |
|---|---|---|
| spec | Spec | |

## Spec

| Field | Type | Description |
|---|---|---|
| network | Network | Network configuration for the package |
| monitor | Monitor[] | Create Service or Pod Monitor configurations |
| sso | Sso[] | Create SSO client configurations |
| caBundle | CaBundle | CA bundle configuration for the package |

### Network

| Field | Type | Description |
|---|---|---|
| expose | Expose[] | Expose a service on an Istio Gateway |
| allow | Allow[] | Allow specific traffic (namespace will have a default-deny policy) |
| serviceMesh | ServiceMesh | Service Mesh configuration for the package |

#### Expose

| Field | Type | Description |
|---|---|---|
| description | string | A description of this expose entry; this will become part of the VirtualService name |
| host | string | The hostname to expose the service on |
| gateway | string | The name of the gateway to expose the service on (default: tenant) |
| domain | string | The domain to expose the service on; only valid for additional gateways (not tenant, admin, or passthrough) |
| service | string | The name of the service to expose |
| port | number | The port number to expose |
| selector | | Selector for Pods targeted by the selected Services (so the NetworkPolicy can be generated correctly). |
| targetPort | number | The service targetPort. This defaults to port and is only required if the service port is different from the target port (so the NetworkPolicy can be generated correctly). |
| advancedHTTP | AdvancedHTTP | Advanced HTTP settings for the route. |
| match | Match[] | Match the incoming request based on custom rules. Not permitted when using the passthrough gateway. |
| podLabels | | Deprecated: use selector |
| uptime | Uptime | Uptime monitoring configuration for this exposed service. Presence of checks.paths enables monitoring. |
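A minimal Package that exposes one service through the default `tenant` gateway might look like the following sketch. `apiVersion: uds.dev/v1alpha1` and `kind: Package` are assumed from the CR name and version in this reference. The package name, labels, host, and port are placeholders.

```yaml
# Sketch: apiVersion/kind are assumed; names, labels, host, and port are placeholders.
apiVersion: uds.dev/v1alpha1
kind: Package
metadata:
  name: podinfo
  namespace: podinfo
spec:
  network:
    expose:
      - description: podinfo-ui # becomes part of the generated VirtualService name
        service: podinfo
        selector: # pods behind the service, used to generate the NetworkPolicy
          app.kubernetes.io/name: podinfo
        gateway: tenant
        host: podinfo
        port: 9898
```

`targetPort` is omitted because, per the table, it defaults to `port` and is only needed when the two differ.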

##### AdvancedHTTP

| Field | Type | Description |
|---|---|---|
| corsPolicy | CorsPolicy | Cross-Origin Resource Sharing policy (CORS). |
| directResponse | DirectResponse | An HTTP rule can either return a direct response, redirect, or forward (default) traffic. |
| headers | Headers | |
| match | Match[] | Match the incoming request based on custom rules. Not permitted when using the passthrough gateway. |
| rewrite | Rewrite | Rewrite HTTP URIs and Authority headers. |
| redirect | Redirect | An HTTP rule can either return a direct response, redirect, or forward (default) traffic. |
| retries | Retries | Retry policy for HTTP requests. |
| weight | integer | Weight specifies the relative proportion of traffic to be forwarded to the destination. |
| timeout | string | Timeout for HTTP requests; disabled by default. |

###### CorsPolicy

| Field | Type | Description |
|---|---|---|
| allowCredentials | boolean | Indicates whether the caller is allowed to send the actual request (not the preflight) using credentials. |
| allowHeaders | string[] | List of HTTP headers that can be used when requesting the resource. |
| allowMethods | string[] | List of HTTP methods allowed to access the resource. |
| allowOrigin | string[] | |
| allowOrigins | AllowOrigins[] | String patterns that match allowed origins. |
| exposeHeaders | string[] | A list of HTTP headers that the browsers are allowed to access. |
| maxAge | string | Specifies how long the results of a preflight request can be cached. |

###### AllowOrigins

| Field | Type | Description |
|---|---|---|
| exact | string | |
| prefix | string | |
| regex | string | RE2 style regex-based match (https://github.com/google/re2/wiki/Syntax). |
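A CORS policy set through `advancedHTTP` on an expose entry could look like the following fragment. It shows one exact-match origin and one RE2 regex origin. The origins, methods, headers, and cache duration are placeholders, and the fragment assumes it sits inside an `expose` entry like the one in the Package sketch.

```yaml
# Fragment of an expose entry; origins, methods, headers, and maxAge are placeholders.
advancedHTTP:
  corsPolicy:
    allowOrigins:
      - exact: https://app.example.com
      - regex: "https://.*\\.example\\.com" # RE2 syntax; backslashes escaped for double-quoted YAML
    allowMethods:
      - GET
      - POST
    allowHeaders:
      - authorization
      - content-type
    allowCredentials: true
    maxAge: 24h # how long browsers may cache the preflight result
```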

###### DirectResponse

| Field | Type | Description |
|---|---|---|
| body | Body | Specifies the content of the response body. |

###### Body

| Field | Type | Description |
|---|---|---|
| bytes | string | response body as base64 encoded bytes. |
| string | string | |

###### Request

| Field | Type | Description |
|---|---|---|
| add | | |
| remove | string[] | |
| set | | |

###### Response

| Field | Type | Description |
|---|---|---|
| add | | |
| remove | string[] | |
| set | | |
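Header manipulation could be expressed as in the following fragment. It assumes that `advancedHTTP.headers` holds the `request` and `response` objects from the two tables above, and that `add`/`set` take maps of header name to value (their types are blank in this reference). The header names and values are placeholders.

```yaml
# Fragment of an expose entry; header names and values are placeholders.
advancedHTTP:
  headers:
    request:
      set:
        x-forwarded-app: podinfo # overwrite (or create) the header before forwarding
      remove:
        - x-debug # strip this header from the incoming request
    response:
      add:
        x-served-by: uds # append a header to the response
```

Istio follows the same request/response split. There, `set` overwrites any existing value, while `add` appends a value.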

###### Match

| Field | Type | Description |
|---|---|---|
| ignoreUriCase | boolean | Flag to specify whether the URI matching should be case-insensitive. |
| method | Method | |
| name | string | The name assigned to a match. |
| queryParams | | Query parameters for matching. |
| uri | Uri | |

###### Method

| Field | Type | Description |
|---|---|---|
| exact | string | |
| prefix | string | |
| regex | string | RE2 style regex-based match (https://github.com/google/re2/wiki/Syntax). |

###### Uri

| Field | Type | Description |
|---|---|---|
| exact | string | |
| prefix | string | |
| regex | string | RE2 style regex-based match (https://github.com/google/re2/wiki/Syntax). |
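A match rule combining the `Match`, `Method`, and `Uri` fields might look like the following fragment, placed under an expose entry that does not use the passthrough gateway. The match name and path prefix are placeholders. Each of `method` and `uri` uses exactly one of `exact`, `prefix`, or `regex`.

```yaml
# Fragment of an expose entry (not allowed with the passthrough gateway); values are placeholders.
match:
  - name: api-reads
    uri:
      prefix: /api
    method:
      exact: GET
    ignoreUriCase: true # /API and /api both match
```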

###### Rewrite

| Field | Type | Description |
|---|---|---|
| authority | string | rewrite the Authority/Host header with this value. |
| uri | string | rewrite the path (or the prefix) portion of the URI with this value. |
| uriRegexRewrite | UriRegexRewrite | rewrite the path portion of the URI with the specified regex. |

###### UriRegexRewrite

| Field | Type | Description |
|---|---|---|
| match | string | RE2 style regex-based match (https://github.com/google/re2/wiki/Syntax). |
| rewrite | string | The string that replaces the matching portions of the original URI. |
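A regex rewrite that strips an `/api/` prefix before forwarding could be sketched as follows. The path is a placeholder. The `\1` back-reference follows the RE2/Istio convention for capture groups in `rewrite`, which is an assumption about how UDS passes the value through.

```yaml
# Fragment of an expose entry; the /api/ prefix is a placeholder.
advancedHTTP:
  match:
    - name: api
      uri:
        prefix: /api/
  rewrite:
    uriRegexRewrite:
      match: ^/api/(.*)$ # capture everything after /api/
      rewrite: /\1 # replace the whole path with the captured remainder
```

Under these assumptions, a request for `/api/healthz` would be forwarded with the path `/healthz`.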

###### Redirect

| Field | Type | Description |
|---|---|---|
| authority | string | On a redirect, overwrite the Authority/Host portion of the URL with this value. |
| port | integer | On a redirect, overwrite the port portion of the URL with this value. |
| derivePort | string (enum) | On a redirect, dynamically set the port. Valid options: `FROM_PROTOCOL_DEFAULT` (automatically set to 80 for HTTP and 443 for HTTPS) and `FROM_REQUEST_PORT` (automatically use the request port). |
| redirectCode | integer | On a redirect, specifies the HTTP status code to use in the redirect response. |
| scheme | string | On a redirect, overwrite the scheme portion of the URL with this value. |
| uri | string | On a redirect, overwrite the Path portion of the URL with this value. |
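A permanent redirect to a new host over HTTPS could be written as the following fragment. The host and path are placeholders. Per the `AdvancedHTTP` description, a rule either returns a direct response, redirects, or forwards traffic, so this fragment shows only the redirect.

```yaml
# Fragment of an expose entry's advancedHTTP block; host and path are placeholders.
advancedHTTP:
  redirect:
    scheme: https
    authority: new.example.com
    uri: /welcome
    derivePort: FROM_PROTOCOL_DEFAULT # 443 for HTTPS, per the table above
    redirectCode: 301
```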

###### Retries

| Field | Type | Description |
|---|---|---|
| attempts | integer | Number of retries to be allowed for a given request. |
| perTryTimeout | string | Timeout per attempt for a given request, including the initial call and any retries. |
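An overall timeout combined with a retry policy could be sketched as follows. The durations and attempt count are placeholders, chosen so that the initial call plus two retries (3 × 3s = 9s) fits inside the 10s route timeout. Under Istio's semantics, the route `timeout` bounds the whole exchange, retries included.

```yaml
# Fragment of an expose entry's advancedHTTP block; durations and counts are placeholders.
advancedHTTP:
  timeout: 10s # overall limit for the request, including retries
  retries:
    attempts: 2 # retries after the initial call
    perTryTimeout: 3s # limit for each individual attempt
```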